Going Back to Old Designs

Introduction:

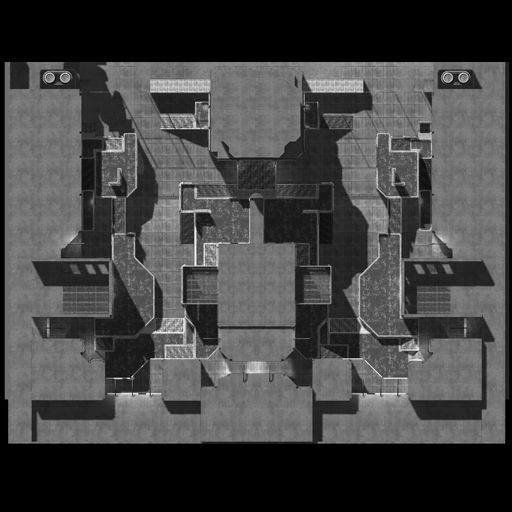

When designing games, you sometimes flesh out an idea and, after a while, decide that it is not right for this particular game. The worst thing you can do in this situation is scrap the idea completely and never look back at it. Sometimes the best designs come from ideas that you didn’t like initially. This recently happened to me when I was working on level designs for Space Dunk. One of my first ideas was a diamond-shaped arena. The initial idea did not test well at QA: players were having a lot of trouble figuring out where to go. The level was taken out of the game, but recently I revisited it and came up with two different levels based on my initial design.

What to think about when revisiting concepts:

The first two things I like to consider when revisiting an old concept are: what was my original goal for this level, and what part of the original idea did not work? Thinking about my goal helps me remember why I made the design choices I did the first time I was designing the level. It also lets me think about better ways to achieve the same goal, or reconsider the goal itself and possibly change it. When you look at why a level did not work, it’s important to look at it objectively. It’s easy to say a level didn’t work because it was a bad design, but it’s much harder to look at the specifics of why it didn’t work. When I looked back at my diamond level, I realized that one problem was that it was just too big; making the level smaller helped players navigate the space better. The other major problem I discovered was that the level was too cluttered, so players could not easily see the other team’s goals. Since the goal of the game is to score in the other team’s goal, not being able to see the objective caused players to feel lost.

Making Changes:

The first thing I do when making changes to a level concept I am revisiting is save a new copy of the level, so that I still have the old version before I start making changes. When I was making changes to the diamond Space Dunk level, one thing I did was scale the level down to help with navigation. I also rotated the entire arena and removed most of the objects from the middle so both teams could see the goals at all times. This allowed me to drastically change the level pretty quickly and get it ready for testing again. The only way to find out if the changes you make are for the better is to test them.

Conclusion:

Looking at old concepts is a good way to inspire yourself, but it is important to remember that not every idea you have is going to be salvageable. This does not mean that you can’t learn from the concept. The first few levels I ever designed and created were really terrible, but I learned a lot from making them, and I learned a lot more from revisiting them and playing them. When you return to an old project or concept after you have distanced yourself from the feelings you had towards it when you were first making it, you have the potential to learn a lot from both the mistakes and the successes of your past.